Apple named its next-generation version of the Mac operating system High Sierra because it's designed to improve macOS Sierra through several major under-the-hood updates. While most of what's in High Sierra isn't outwardly visible, there are some refinements to existing features and apps like Safari, Photos, Siri, FaceTime, and more. We went hands-on with the High Sierra beta to give MacRumors readers a quick idea of what changes and improvements to expect when the software comes out this fall. Check out the video below to see what's new.

Some of the biggest app changes are in Photos, which has a persistent sidebar, editing tools for Curves and Selective Color, new filters, options for editing Live Photos, new Memories categories, improved third-party app integration, and improvements to facial recognition, with the People album now synced across all of your devices. Safari is gaining a new autoplay blocking feature for videos, Intelligent Tracking Prevention to protect your privacy, and options for customizing your browsing experience site-by-site, while Mail improvements mean your messages take up 35 percent less storage space. Siri has a more natural voice, just like on iOS 11, and can answer more music-related queries. iCloud Drive file sharing has been added, and in High Sierra and iOS 11, all of your iMessage conversations are saved in iCloud, saving more storage space. When you install High Sierra, your Mac will be converted to a new, more modern file system called Apple File System, or APFS. APFS is safe, secure, and optimized for modern storage systems like solid-state drives.

Features like native encryption, crash protection, and safe document saves are built in, plus it is ultra responsive and will bring performance enhancements to the Mac. APFS is accompanied by High Efficiency Video Coding (HEVC), which introduces much better video compression compared to H.264 without sacrificing quality.

The other major under-the-hood update is Metal 2, which will bring smoother animations to macOS and will provide developers with tools to create incredible apps and games. Metal 2 includes support for machine learning, external GPUs, and VR content creation, with Apple even providing an external GPU development kit for developers so they can get their apps ready for eGPU support that's coming to consumers this fall. Apple is also working with Valve, Unity, and Unreal to bring VR creation tools to the Mac. macOS High Sierra will run on all Macs that are capable of running macOS Sierra.

Wow, that reads like it auto-converts HFS to APFS. I wonder how it does that? Create a new APFS partition, copy from HFS to APFS, reformat the HFS partition to APFS, then delete a partition to end up with one big APFS partition? Similarly, I wonder about the relationship between this and Time Capsule.

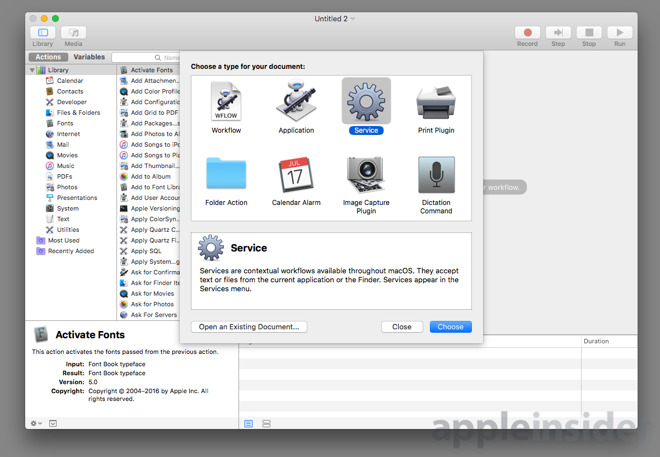

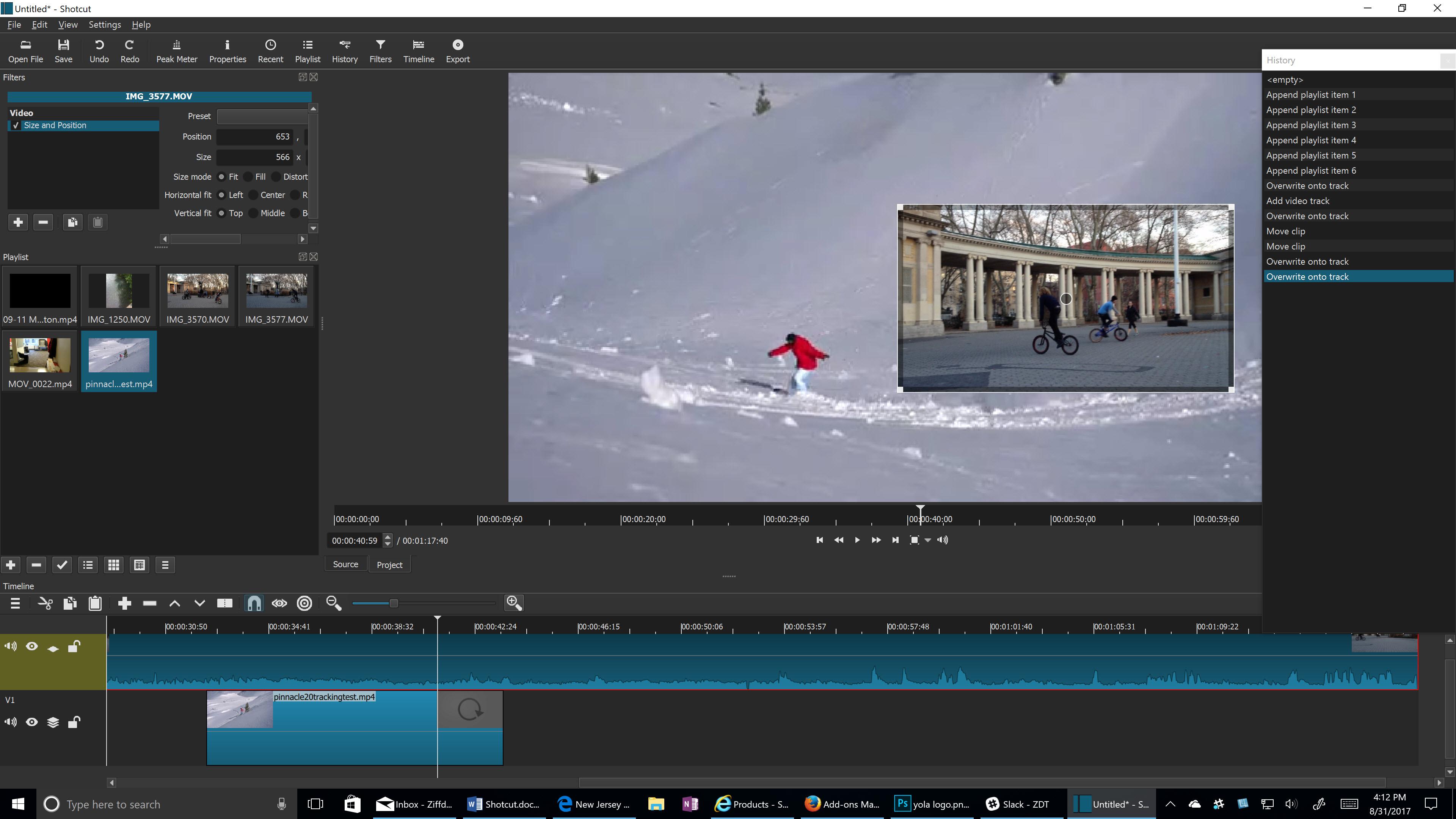

Apple previewed macOS High Sierra on June 5, 2017, the latest version of the world’s most advanced desktop operating system, delivering new core storage, video, and graphics technologies that pave the way for future innovation on the Mac. macOS High Sierra offers an all-new file system and support for High-Efficiency Video Coding (HEVC).

Does Time Capsule remain as is, or would it be converted to APFS too? Can an external HFS drive be auto-updated to APFS using the same approach? Lots of questions.

Edit: lots of people are pointing to this as answers: Thanks to all.

Can you expand upon this? And if it is clearly better, why do you think Apple didn't adopt it after all? They were working on support for a long time.

I don't know if it's where the OP was going, but one of the things ZFS has that APFS noticeably doesn't is baked-in error correction and/or checksumming. Having a background task in the kernel slowly (continually or periodically) reading through the entire filesystem and comparing blocks read off the disk with their associated checksums is huge. If a block goes bad, you find out about it soon, rather than 'uh oh', when you really need it.
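To make the background-scrub idea concrete, here is a minimal Python sketch. It is not how ZFS or any real kernel implements it: it assumes a toy in-memory "disk" of fixed-size blocks, uses CRC32 from `zlib` as a stand-in checksum, and the names `write_blocks` and `scrub` are made up for illustration.

```python
import zlib

BLOCK_SIZE = 4096  # hypothetical block size for this toy model


def write_blocks(data: bytes):
    """Split data into blocks and record a checksum for each one at write time."""
    blocks = [bytearray(data[i:i + BLOCK_SIZE]) for i in range(0, len(data), BLOCK_SIZE)]
    checksums = [zlib.crc32(bytes(b)) for b in blocks]
    return blocks, checksums


def scrub(blocks, checksums):
    """Re-read every block and return the indices whose contents no longer match."""
    return [
        i
        for i, (block, expected) in enumerate(zip(blocks, checksums))
        if zlib.crc32(bytes(block)) != expected
    ]


blocks, checksums = write_blocks(b"x" * (3 * BLOCK_SIZE))
blocks[1][100] ^= 0x01          # simulate a single flipped bit on "disk"
bad = scrub(blocks, checksums)  # the scrub reports which block went bad
print(bad)
```

The point of the sketch is only that the checksum is stored at write time and compared on a later read, so damage is noticed by the scrub rather than by whoever opens the file next.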

With a filesystem that uses 'free' space to store spare copies of blocks, the kernel may be able to recover it on the fly, but even without redundancy, it can flag that the affected file needs to be reloaded from backup (you have those, right?) before passing time increases the odds of the backup being overwritten, getting lost, etc. Some sort of systematic kernel-level data integrity checking is the one bit sorely missing from APFS.

This is only my opinion, but I believe you are being incredibly dramatic. The sky has not fallen. Even the largest storage configurations for the vast majority of macOS machines are still fairly small in the grand scheme.

If people are keeping their most important data there and not utilizing the iCloud features and/or Time Machine... that's on them for putting all their eggs in one basket. You speak as if you know for a fact that there was no reason for them to omit what you wish they hadn't... when you clearly do not have access to any information to back that claim. Point being... get a grip.

A consumer mechanical hard disk will turn up one unrecoverable bit per 12 TB of reads. That is listed on the manufacturer's data sheet and it is seen as normal. And that's just the tip of the iceberg.
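For anyone who wants to check the arithmetic: the 12 TB figure matches an unrecoverable read error rate of one per 10^14 bits, a number consumer drive data sheets commonly quote. That rate is an assumption here, since the post doesn't name a drive, and the sketch below also assumes errors are independent per bit, which is a simplification. The function names are made up for illustration.

```python
import math

URE_RATE = 1e-14  # assumed: one unrecoverable error per 10^14 bits read


def tb_per_expected_error(rate: float = URE_RATE) -> float:
    """Terabytes (10^12 bytes) read, on average, per unrecoverable error."""
    bits = 1 / rate
    return bits / 8 / 1e12


def p_at_least_one_error(tb_read: float, rate: float = URE_RATE) -> float:
    """Chance of one or more errors while reading tb_read terabytes,
    treating each bit as an independent trial (a simplifying assumption)."""
    bits = tb_read * 1e12 * 8
    return 1 - math.exp(bits * math.log1p(-rate))


print(tb_per_expected_error())    # about 12.5 TB per expected error
print(p_at_least_one_error(4.0))  # chance of hitting an error while reading 4 TB
```

Under those assumptions, a single full read of a multi-terabyte disk already has a noticeable chance of hitting one bad bit, which is the poster's point.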

You can't tell me how many corrupt files you have on your disk. That fact alone should terrify you. Depending on how old your Mac is, how old your data is, and how much data you have, it could be nearly a certainty that you have corrupt files on it. You just have no idea. Ignorance is bliss, I guess? Hopefully it's some useless stuff, not something you care about.

ZFS was a path that Apple considered taking until the situation was made problematic by licensing issues. The full feature set of ZFS requires considerable resources, and lots of memory. Obviously things like de-dup features could be turned off and the memory requirements drop considerably. Apple is not currently in the server market, so many of the features of ZFS would be overkill. HFS+ reached maturity long ago and has been feature complete. APFS still has a rather long list of features to implement, and by the time it is feature complete, hard drives will likely be less and less of an installed base for this technology. Data integrity is important, and having built-in smarts to know when data has suffered 'bit rot' is valuable. I have yet to run into this issue on my SSD, so I am not sure what form it takes. BUT, both APFS and SSD technology are maturing, and I am not sure if data integrity will be more of a hardware feature set or a software feature set once everything has matured.

Only time will tell, but things are now moving pretty quickly.

People keep talking about a brand or a specific file system (ZFS), but I'm talking about a feature: data integrity. Every major OS has a data integrity story except Apple. Microsoft has ReFS, Linux has BTRFS, Ubuntu has ZFS, Solaris has ZFS. Why doesn't Apple have a data integrity story in their new file system?

I can't think of a good reason. Never trust hardware. Hardware deals in analog signaling and analog switching. It lies to you. Application programmers have the luxury of trusting the abstraction. Kernel, driver, firmware, and file system programmers do not. The idea that hardware error checking will save us is crazy.

(I'm on the Mac since the 7100). If you've been on the Mac since the 7100 and you have been moving your data forward since then, it has absolutely happened to you. You just have no idea. Data size, transfer count, and time. I personally have songs that have acquired blips, JPEGs that have acquired some weirdness, and video files with errant blocks that didn't use to be there. It happens if you know what to look for.

All modern HDDs and SSDs use error correcting codes (ECC), which makes additional error correction superfluous. I quote myself from above: Never trust hardware. Hardware deals in analog signaling and analog switching. It lies to you. Application programmers have the luxury of trusting the abstraction. Kernel, driver, firmware, and file system programmers do not. The idea that hardware error checking will save us is crazy.

I disagree with your take and understanding of the matter. As someone who understands end-to-end data checks, I can say the impact is not infinitesimal but real and very measurable. Depending on the application, what you are saying is true.

APFS is not there, but it is not designed for that goal. It is designed for purely consumer-based applications. In this mindset, Apple made decisions (that I mostly understand): for the extremely RARE (and it is rare, infinitesimal as you would say) event of a corrupted file, they will rely on file backups for recovery. Protect the structure of the drive (metadata) and rely on redundant copies (backups) for the user data. If the user cares about their data, they will have backups. From a consumer standpoint, I understand this mindset.

If you understand end-to-end data checks, then you understand how much time the CPU spends waiting for data from the disk.

Even on modern, very fast PCIe SSDs, the CPU is doing a lot of idling while the data streams in. Modern CPUs are so fast (even the Core M stuff in the MacBooks) that these kinds of workloads are just not a big deal. The idea that backups will save you from file corruption is hilarious. Corruption is systemic: if a user has backups, the backups will almost certainly contain the same corruption. If you have no way of identifying its existence, it just moves around the data set until all copies are corrupt. Then you open it, find that it is corrupt, go to your backup, and it is also corrupt. Additionally, APFS removes the ability to easily make a duplicate of the file.

So you copy a file to try and preserve it, but APFS just makes a thin file that points to the same blocks as the original. So now when one copy is corrupt, both copies are corrupt.
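Here is a toy Python model of the block sharing described above. It is not APFS's actual on-disk design: `BlockStore`, `clone`, and `write` are hypothetical names, and the copy-on-write step is just the generic technique.

```python
class BlockStore:
    """Toy volume: files are lists of block ids that point into shared storage."""

    def __init__(self):
        self.blocks = {}   # block id -> bytearray (the "physical" data)
        self.files = {}    # file name -> list of block ids
        self._next_id = 0

    def _alloc(self, data: bytes) -> int:
        bid = self._next_id
        self._next_id += 1
        self.blocks[bid] = bytearray(data)
        return bid

    def create(self, name: str, chunks) -> None:
        self.files[name] = [self._alloc(c) for c in chunks]

    def clone(self, src: str, dst: str) -> None:
        """Copy only the list of block ids; no data is duplicated."""
        self.files[dst] = list(self.files[src])

    def write(self, name: str, index: int, data: bytes) -> None:
        """Copy-on-write: a modified block gets fresh storage for this file only."""
        self.files[name][index] = self._alloc(data)

    def read(self, name: str) -> bytes:
        return b"".join(bytes(self.blocks[b]) for b in self.files[name])


vol = BlockStore()
vol.create("song.m4a", [b"AAAA", b"BBBB"])
vol.clone("song.m4a", "song copy.m4a")

# Simulate media corruption in the one physical block both files share.
shared = vol.files["song.m4a"][1]
vol.blocks[shared][0] ^= 0xFF

print(vol.read("song.m4a") == vol.read("song copy.m4a"))  # both see the same damage
```

In this model the two names only diverge when one of them is written to, so a clone on the same volume adds no redundancy. As far as I know, a copy onto a different volume or disk still gets its own blocks.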

Have fun with that. Corruption in files (bit rot in some cases, hardware issues in others) is much more likely than people seem to think it is. I would bet good money that plenty of folks in this forum (including some arguing with me now) have corrupt files. Unfortunately they have no way to check. Disks are getting bigger, not smaller. The need for data integrity is increasing, not decreasing.

Why is everybody arguing against a feature that is obviously helpful and good for everybody?